How I Do Off-Site Cloud Backup

- Published Nov 9, 2011 Updated Dec 3, 2011

In my ongoing quest for the perfect way to back up my personal data I have tweaked the setup yet again. Most of what I wrote nearly three years ago (How to Build a State of the Art Backup System for Your Personal Data) still stands, so I will focus on off-site cloud backup in this article.

Many people back up to an external hard drive they keep near their PC or even - god forbid - connected and running all the time. Local backup is a nice start, but with that alone you are nowhere near the finish line yet. If the data is of any importance, an additional layer of security is mandatory: off-site storage. If you are not sure why, consider the long list of calamities that threaten not only your master copy but also its backup in the same room: fire, water, burglary, little children playing, and so on.

Over the years I have implemented several different off-site backup strategies. I started out with an extension of the backup mechanism described in my earlier article.

Off-Site Backup Method #1: External Hard Disk

If you are already doing a robocopy backup to an external hard disk, what is easier than to take that hard disk with all your data and put it in a safe place, far away from your PC? The homes of parents, friends or children are good candidates.

That was my first go at an off-site backup. It is dead simple but only stores a snapshot from one point in time, which starts to become obsolete the moment you create it. To update the backup you either need a new hard disk or have to fetch the drive from your parents or friends, update it and bring it back. That is a little too cumbersome to do often. An additional downside: since you are likely to deposit the backup drive somewhere nearby (e.g. in the same town), a natural disaster like the New Orleans flood could destroy both the original and the backup.

Last but not least, this method is not “cool” - it does not have cloud in it. Eventually I moved on.

Off-Site Backup Method #2: Backup as a Service

Of the numerous companies that offer backup as a service, I first tried Mozy, but found out after uploading around 200 GB (which took several months) that migrating to a new computer does not work. Thus motivated to look for alternatives, I settled on IDrive and used it for some time.

Like any other backup as a service company, IDrive offers a combination of cloud storage, transmission protocol and client software. Each vendor creates its own proprietary solution, incompatible with anything else. You cannot, for example, use a different client with IDrive’s storage. Ultimately that was the reason for me to change yet again.

Although IDrive generally works well enough, its Windows client is horrible. It installs to C:\IDrive by default and uses a weird user interface toolkit that is just - different.

IDrive has a neat function called “continuous backup” that sounds great in theory but in practice causes far too much IO load for my taste. After I had identified it as the reason for the high hard disk activity I was experiencing, I disabled continuous backup completely. But now I was using IDrive just like a traditional backup program, except that it stored to a proprietary cloud, which prevented me from changing the client software.

I realized what I want is a good, old-fashioned backup program, but modern enough to use cloud storage as a backup target. A tool I can run when I feel like it but which does not impact my system when inactive. I moved on.

Off-Site Backup Method #3: Storage as a Service

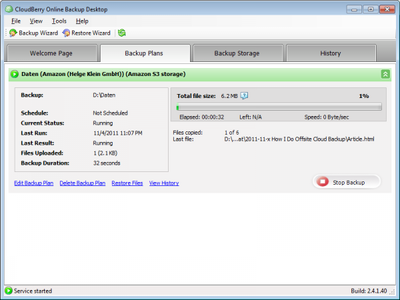

I decided to use the de facto standard for cloud storage, Amazon’s Simple Storage Service (S3). Because of its public APIs, S3 is very popular and many applications know how to talk to it. Next I looked for a backup program that meets my high quality standards and works with S3. I tried Cloudberry Backup and was astonished that I found nothing to dislike.

Cloudberry Backup is a very solid product, well-engineered, with all the right configuration options but not so many features that quality suffers or the user interface becomes cluttered. It works with 12 different cloud storage services, among them Amazon S3 and Microsoft Azure. It supports encryption with a self-chosen key, compression, multiple file versions, bandwidth limitation that really works and block-level transfer to save time and bandwidth. And it is device-independent: if you buy a new computer and transfer your data over, Cloudberry Backup picks up right where it left off on the old machine.

This is where I am now. I have all my data on S3 (except for the videos), a very nice tool to put new and changed stuff there or restore data if the need arises, and my computer is free of IO-, CPU- and memory-hungry background processes. IT as it should be. All this goodness cost me $9.39 for Amazon S3 storage and transfer in the first month (not using the cheaper reduced redundancy storage). That is an amount I am perfectly happy with.

By the way, I came to know Cloudberry Backup because its author commented on my blog post about Amazon’s CloudFront CDN, mentioning his free tool Cloudberry Explorer for Amazon S3. Nice marketing effort, Andy ;-)

One More Thing

Another nice backup tool I want to mention is HardlinkBackup. It is very useful for backing up from the internal hard disk to an external drive. The neat thing about it is that it creates a new mirror of the directory structure on the target device each time it is run. Files that are already present in an older backup folder are hard-linked to, while new or changed files are copied. Unfortunately the website is German only at the time of writing.
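
To illustrate the general idea, here is a minimal Python sketch of a hard-link snapshot. It is not how HardlinkBackup itself is implemented, only the technique described above. The function name `snapshot`, the convention that snapshot folder names sort chronologically, and the change check via `filecmp` are assumptions made for this example.

```python
import filecmp
import os
import shutil
from pathlib import Path


def snapshot(source: Path, backup_root: Path, name: str) -> Path:
    """Create backup_root/name as a complete mirror of source.

    Files unchanged since the most recent older snapshot are hard-linked
    to it; new or changed files are copied. Assumes snapshot names sort
    chronologically (hypothetical convention, e.g. "2011-11-09").
    """
    backup_root.mkdir(parents=True, exist_ok=True)
    older = sorted(p for p in backup_root.iterdir() if p.is_dir())
    last = older[-1] if older else None

    target = backup_root / name
    target.mkdir()

    for dirpath, _dirnames, filenames in os.walk(source):
        rel = Path(dirpath).relative_to(source)
        (target / rel).mkdir(parents=True, exist_ok=True)
        for filename in filenames:
            src = Path(dirpath) / filename
            dst = target / rel / filename
            old = last / rel / filename if last is not None else None
            # filecmp.cmp first compares size and modification time and
            # falls back to comparing contents if those differ.
            if old is not None and old.is_file() and filecmp.cmp(src, old):
                os.link(old, dst)       # unchanged: no extra disk space used
            else:
                shutil.copy2(src, dst)  # new or changed: copy with metadata
    return target
```

Calling `snapshot(Path("C:/Data"), Path("E:/Backup"), "2011-11-09")` (paths are placeholders) would create a full directory tree for that day. Every snapshot folder looks like a complete backup, but unchanged files occupy disk space only once. Hard links only work if all snapshots live on the same volume and the file system supports them, as NTFS does.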
