Why Disabling the Creation of 8.3 DOS File Names Will Not Improve Performance. Or Will It?

- Windows Internals

- Published Sep 22, 2008

It is a common practice amongst administrators to disable the creation of short file names on NTFS. I freely admit to having recommended this in the past. Was I wrong?

Background

NTFS is relatively relaxed about file names. They can be quite long (255 characters) and may contain “strange” characters (nearly all Unicode characters are allowed). Today this is taken for granted, but when NTFS was conceived, many applications made assumptions about the length of file names and about the characters allowed in them. Those were either old or badly written applications, but the operating system had to support them nonetheless.

For that best of all reasons, backwards compatibility, NTFS has an interesting ability: it can store multiple names per file. If a name is valid both as a DOS and as a Win32 name, NTFS stores only that one name. If, however, the “real” (Win32) file name is too exotic for the strict 8.3 rule of DOS (at most eight characters, a dot and at most three characters of extension, drawn from a restricted character set), a shortened version is stored alongside it. Whenever a file or directory name changes in any way, the short name must again be derived from it and written to disk. It seems only logical that many try to avoid this supposedly time-consuming operation. But is it really time-consuming?
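A rough Python sketch of the test described above, deciding whether a name needs a separate short name at all. The set of permitted punctuation is an assumption, and the function ignores letter case, which glosses over how NTFS treats lowercase names that otherwise fit the 8.3 pattern. The name needs_short_name is hypothetical.

```python
import string

# Assumed 8.3 character set: letters, digits and some punctuation.
_EXTRA_83 = "!#$%&'()-@^_`{}~"
VALID_83 = set(string.ascii_uppercase + string.digits + _EXTRA_83)


def needs_short_name(name: str) -> bool:
    """Return True if `name` does not fit the 8.3 pattern (simplified)."""
    stem, dot, ext = name.rpartition(".")
    if not dot:                      # no dot at all: the whole name is the stem
        stem, ext = name, ""
    # 1-8 character stem, at most 3 characters of extension, no trailing dot
    if not 1 <= len(stem) <= 8 or len(ext) > 3 or (dot and not ext):
        return True
    # Case is ignored here (a simplification); any other character disqualifies
    return not all(ch.upper() in VALID_83 for ch in stem + ext)


for n in ["README.TXT", "Program Files", "index.html"]:
    print(n, needs_short_name(n))
```

Names such as "Program Files" (too long, contains a space) or "index.html" (four-character extension) are the ones that end up with a second, short name.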

Beneath the Covers

With NTFS, everything is a file, even the master file table (MFT), the highly advanced grandchild of MS-DOS’s file allocation table (FAT). The MFT consists of file records that are 1 KB each. File records in turn contain the standard file properties like date/time, attributes, names and so on. Even a file’s data is stored in the file record if there is little enough of it to fit into what remains of the 1 KB after the standard fields have been written.

Now how does all this relate to the claim made in the article title?

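A file record is a tightly packed structure. During the creation of a file, the various fields stored in that structure need to be compiled in memory and written to disk anyway. During a rename, at least the long file name needs to be replaced. This requires at least one disk IO. The short name lives in the same file record, so it only increases the amount of data written during that IO: an 8.3 name has at most 12 characters (24 bytes in UTF-16), and even with the overhead of the additional file name attribute that holds it, the extra data amounts to roughly a hundred bytes. As long as the record still fits into its 1 KB, the number of IOs stays the same, and the extra bytes simply do not matter.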

Although the creation of 8.3 names does not appear to degrade disk performance, some might argue that there is still more work for the CPU to do. After all, the short file name needs to be derived from the long name: the file system has to shorten the name, replace characters that are not allowed in 8.3 names and convert the result to uppercase. That, however, is an operation so simple that its effect on performance is simply not measurable.
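A simplified sketch of the derivation step, to show how little CPU work is involved. It is not NTFS’s exact algorithm: the real one depends on the OEM code page and, after the first few collisions, switches to aliases built from a hash of the long name. The helper derive_short_name and its n parameter (the ~N suffix) are hypothetical.

```python
# Characters not allowed in 8.3 names (simplified assumption)
INVALID_83 = set('"*+,/:;<=>?[\\]|')


def _clean(part: str) -> str:
    """Uppercase, drop spaces and dots, replace other invalid characters."""
    out = []
    for ch in part.upper():
        if ch in " .":
            continue                      # spaces and extra dots are dropped
        if ch in INVALID_83 or not ch.isascii() or not ch.isprintable():
            out.append("_")               # invalid or non-ASCII -> underscore
        else:
            out.append(ch)
    return "".join(out)


def derive_short_name(long_name: str, n: int = 1) -> str:
    """Build a STEM~N.EXT alias from a long name (simplified)."""
    stem, dot, ext = long_name.rpartition(".")
    if not dot or not stem:               # no extension, or a leading-dot name
        stem, ext = long_name, ""
    stem, ext = _clean(stem), _clean(ext)[:3]
    tail = f"~{n}"
    stem = stem[: 8 - len(tail)] + tail   # stem plus ~N is at most 8 chars
    return f"{stem}.{ext}" if ext else stem


print(derive_short_name("Program Files"))       # PROGRA~1
print(derive_short_name("My Document.docx"))    # MYDOCU~1.DOC
```

A handful of string operations per name: this part of the cost is indeed negligible compared to a single disk access.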

Missing Pieces?!

My reasoning above seems to prove conclusively that the additional creation of short file names is not harmful to performance at all. But some pieces of the puzzle are still missing.

The file system needs to make sure that the resulting short name is unique within the directory. That sounds like an expensive operation, but it isn’t, at least not if each directory entry has only one name: NTFS keeps directory entries sorted in B+ trees, which minimizes disk IOs for name lookups. B+ trees are very efficient, but they are sorted by a single key, the name. A file with a short name has two entries in its directory’s tree, one per name, and finding a free short name may take several lookups (if PROGRA~1 is taken, try PROGRA~2, and so on). This can cause additional disk IOs during the derivation of short from long names.
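To make the lookup cost concrete, here is a toy model in which a sorted Python list stands in for the directory’s B+ tree and every probe is counted. In this model, long names and short aliases are stored as separate entries. The class ToyDirectoryIndex and the helper create_file are hypothetical; the stem and extension are passed in already shortened, and the model ignores that real NTFS switches to hashed aliases after a few collisions.

```python
import bisect


class ToyDirectoryIndex:
    """Sorted list standing in for a directory B+ tree (case-insensitive keys)."""

    def __init__(self) -> None:
        self.keys = []     # sorted, upper-cased names (long and short)
        self.lookups = 0   # number of index probes performed

    def contains(self, name: str) -> bool:
        self.lookups += 1
        key = name.upper()
        i = bisect.bisect_left(self.keys, key)
        return i < len(self.keys) and self.keys[i] == key

    def insert(self, name: str) -> None:
        bisect.insort(self.keys, name.upper())


def create_file(index: ToyDirectoryIndex, long_name: str, stem: str, ext: str) -> str:
    """Insert long_name plus the first free alias STEM~N.EXT; return the alias."""
    if index.contains(long_name):
        raise FileExistsError(long_name)
    index.insert(long_name)
    n = 1
    while True:                                   # probe ~1, ~2, ... until free
        tail = f"~{n}"
        alias = stem[: 8 - len(tail)] + tail + (f".{ext}" if ext else "")
        if not index.contains(alias):
            index.insert(alias)
            return alias
        n += 1


idx = ToyDirectoryIndex()
names = ["Report January.txt", "Report February.txt", "Report March.txt"]
aliases = [create_file(idx, n, "REPORT", "TXT") for n in names]
print(aliases, idx.lookups)  # ['REPORT~1.TXT', 'REPORT~2.TXT', 'REPORT~3.TXT'] 9
```

Three similarly named files need 9 probes instead of the 3 a long-name-only directory would need. In a real directory each probe may touch a different index block, which is where the extra disk IOs come from.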

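File system searches are a second reason why the additional creation of 8.3 names might impact performance. Applications use the Win32 API functions FindFirstFile/FindNextFile extensively, and wildcard patterns passed to them are matched against both the long and the short name of each file (which is why a search for *.htm also returns index.html, whose short name is INDEX~1.HTM). Again, the B+ trees can significantly speed up searches, but additional directory queries are required when two different namespaces are in use.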
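The dual matching can be approximated with Python’s fnmatch module. This is a sketch under stated assumptions: each entry is a (long name, short name or None) pair, case-insensitivity is modelled with upper(), and fnmatch patterns only approximate the wildcard rules Windows applies. The function find_matches is hypothetical.

```python
from fnmatch import fnmatchcase


def find_matches(pattern, entries):
    """Return long names whose long OR short name matches `pattern`.

    entries: list of (long_name, short_name_or_None) pairs.
    """
    p = pattern.upper()
    hits = []
    for long_name, short_name in entries:
        names = [long_name] if short_name is None else [long_name, short_name]
        # Every name in every namespace is a candidate for the match
        if any(fnmatchcase(n.upper(), p) for n in names):
            hits.append(long_name)
    return hits


entries = [("index.html", "INDEX~1.HTM"), ("notes.htm", None), ("logo.png", None)]
print(find_matches("*.htm", entries))  # ['index.html', 'notes.htm']
```

Here index.html is found only through its short name, which illustrates why a search has to consider both namespaces.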

Without the cache manager, the effects of short file names on the number of disk IOs, and thus on system performance, would probably be dramatic. But it is important to keep in mind that Windows uses efficient disk caching that greatly reduces the number of times clusters need to be fetched from a physical disk. The MFT is certainly a file that is worth caching.
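A toy illustration of why caching blunts the extra IOs: a least-recently-used cache in front of a simulated disk, where each directory operation touches the same three hypothetical index blocks. Windows’ cache manager does not work exactly like this. The point is only that repeated accesses to the same blocks stop costing physical reads once those blocks are cached.

```python
from collections import OrderedDict


class LRUBlockCache:
    """Toy least-recently-used block cache (not the Windows algorithm)."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.blocks = OrderedDict()   # block number -> cached data
        self.disk_reads = 0           # misses that would hit the physical disk

    def read(self, block_no: int) -> str:
        if block_no in self.blocks:
            self.blocks.move_to_end(block_no)   # hit: now most recently used
            return self.blocks[block_no]
        self.disk_reads += 1                    # miss: "fetch" from disk
        data = f"block {block_no}"
        self.blocks[block_no] = data
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)     # evict least recently used
        return data


def run(capacity: int, rounds: int = 100) -> int:
    """Simulate `rounds` directory operations; return physical reads."""
    cache = LRUBlockCache(capacity)
    for _ in range(rounds):
        for block_no in (0, 1, 2):   # the same three index blocks every time
            cache.read(block_no)
    return cache.disk_reads


print(run(capacity=8))  # 3: only the first access to each block reaches the disk
print(run(capacity=2))  # 300: the working set does not fit, every read misses
```

The second call hints at the limit of the caching argument: when the blocks a workload touches no longer fit in the cache, every extra lookup is again a physical read.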

Conclusion

The one thing that really affects system performance is fetching data from disk. In my analysis I have found cases in which the creation and maintenance of short file names causes additional hard disk IOs. These effects are mitigated by the operating system’s caching mechanisms, though. The performance benefits of disabling the creation of 8.3 names are probably small, and I do not think they are worth breaking backwards compatibility for. As always, actual numbers may vary greatly depending on workload, hardware and other factors.

Disclaimer

This article contains a theoretical analysis. I have not undertaken practical tests in this matter.

References

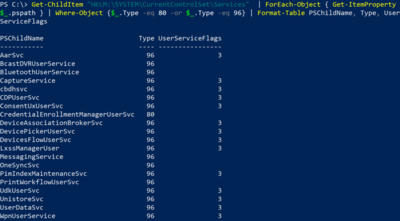

Many web sites describe how to disable the creation of short 8.3 file names by setting the following registry value:

HKLM\System\CurrentControlSet\Control\FileSystem\NtfsDisable8Dot3NameCreation=1 [DWORD]

Here is one of them.
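For reference, a small Python sketch that reads the registry value quoted above with the standard winreg module and translates it. The meanings of values 2 and 3 apply to Windows 7 and Windows Server 2008 R2 and later (older versions know only 0 and 1). The helper names are hypothetical; on non-Windows systems read_setting simply returns None.

```python
import sys

KEY_PATH = r"System\CurrentControlSet\Control\FileSystem"
VALUE_NAME = "NtfsDisable8Dot3NameCreation"

MEANINGS = {
    0: "8.3 names are created on all NTFS volumes",
    1: "8.3 name creation is disabled on all NTFS volumes",
    2: "8.3 name creation is configured per volume",
    3: "8.3 name creation is disabled on all volumes except the system volume",
}


def describe(value):
    """Translate the DWORD into a human-readable description."""
    return MEANINGS.get(value, f"unrecognized value {value!r}")


def read_setting():
    """Return the DWORD from HKLM, or None if unavailable."""
    if sys.platform != "win32":
        return None                    # winreg only exists on Windows
    import winreg
    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, KEY_PATH) as key:
            value, value_type = winreg.QueryValueEx(key, VALUE_NAME)
    except FileNotFoundError:          # key or value not present
        return None
    return value if value_type == winreg.REG_DWORD else None


setting = read_setting()
print(setting, describe(setting) if setting is not None else "not available")
```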

KB article 130694 states that maintaining short and long names can adversely affect NTFS performance. But it applies only to Windows NT 3.1 and 3.5 and is rather vague.

Update

Included B+ trees in the analysis and rephrased the conclusion.
